The Canadian English Language Proficiency Index Program-General (CELPIP-G) Test aims to assess the general English language functional proficiency of individuals for adapting to life in Canada. The distinctiveness of the CELPIP-G Test, in contrast to other leading proficiency tests in the industry, arises from its design and use for Canadian immigration purposes. Apart from IELTS (International English Language Testing System), it is the only test that is accepted by the Department of Citizenship and Immigration Canada (CIC) for permanent resident and citizenship purposes (“Citizenship and Immigration Canada”, n.d.). In addition, the test uses the English variety spoken in Canada. Accordingly, it exposes individuals to Canadian English rather than other varieties of English used in other proficiency tests. Using Bachman and Palmer’s qualities of tests (1996) as a framework, I will critically examine the purpose, strengths, and limitations of the reading section of the CELPIP-G Test.

Background and Users

Paragon Testing Enterprises, a subsidiary of the University of British Columbia (UBC), launched the CELPIP-G Test in response to the success of its placement test, the Language Proficiency Index (LPI; L. Barrows, personal communication, October 29, 2015). The LPI measured only reading and writing skills at the beginning university and college level, and demand grew for a test that assessed all four language skills (reading, writing, speaking, and listening) for general and academic purposes. To meet this demand, the CELPIP-G Test was developed in 2002 (L. Barrows, personal communication, October 29, 2015). In the same year, the test was recognized by CIC as proof of general language proficiency for the Federal Skilled Worker Class; in 2012, it was accepted for the streams of the Canadian Experience Class (CEC); and in 2013, it was established for all current federal economic immigration programs (Federal Skilled Worker Program, CEC, and Federal Skilled Trades Program), the Provincial Nominee Program, and the Business Program (L. Barrows, personal communication, October 29, 2015).

In addition to the CELPIP-G Test, an academic English language proficiency test, CELPIP-Academic, was introduced in 2005 to fulfill language requirements for university admission, giving it parity with proficiency tests such as IELTS, TOEFL (Test of English as a Foreign Language), and MELAB (Michigan English Language Assessment Battery). However, the CELPIP-Academic was retired in August 2015 (L. Barrows, personal communication, October 29, 2015). As a result of limitations identified in the validity and reliability research, a revised version of the CELPIP-G was introduced in April 2014, which changed the structure and scoring template, the time allotted to different sections, and the number of items in each skill appropriate to everyday situations (McKenzie, 2014).

Although the test was originally developed as a general and academic proficiency test, it is now used mainly for immigration to Canada. In addition to Citizenship and Immigration Canada, the test results are used by Canadian immigration associations and consultants who advise applicants for immigration to Canada, and by professional associations and employers who need evidence of the English language proficiency of their members and prospective employees (“CELPIP General”, n.d.).

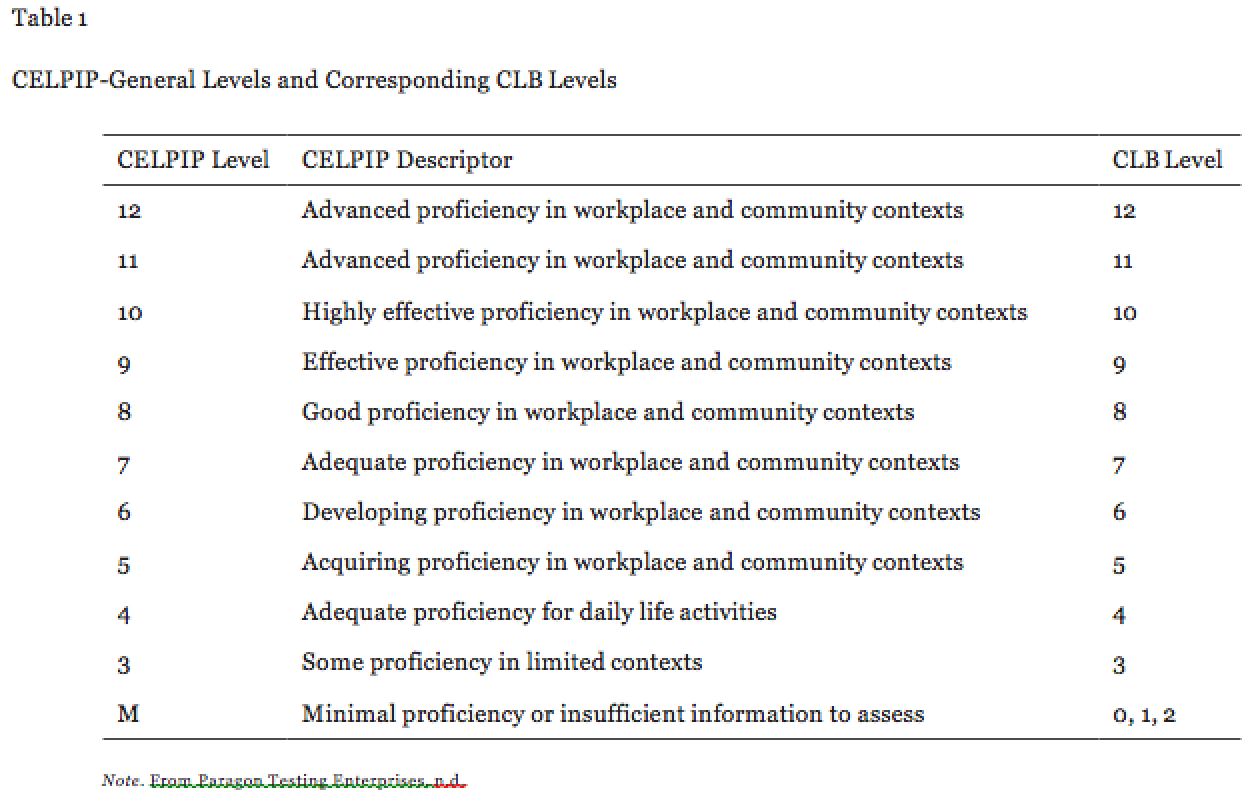

In the previous version of the CELPIP-G Test, an individual’s scores needed to be converted to the Canadian Language Benchmark (CLB) criterion. This proved problematic because the CLB had a scale of 1–9+ whereas the CELPIP-G was scored on a scale of 1–5 (high to low). The revised version of the CELPIP-G is now aligned with the CLB, and scores are given on a scale of 1–9+ (McKenzie, 2014). The following table shows each CELPIP-G level and its corresponding description.

Purpose

The purpose of the CELPIP-G is to assess an individual’s general language ability, or functional competency, to communicate in everyday and workplace situations. The test takers include both English-first and English-as-a-second-language speakers, and the test provides a score based on the CLB 2000 (Canadian Language Benchmarks). Aligned with its purpose, the test contains “Canadian English Language … that accurately assesses listening, reading, writing, and speaking skills in typical everyday situations” (“CELPIP-G Brochure”, n.d.). The construct of functional reading proficiency in English, the focus of this review, measures the test taker’s ability to “engage with, understand, interpret, and make use of written English texts to achieve day-to-day and general workplace communicative functions” (Wu & Stone, 2015, p. 5).

Test Format and Scoring of the Reading Section

The CELPIP-G Test is computer-delivered and is completed in one three-hour sitting. The reading section, which is one hour long, uses a multiple-choice item format, which allows quick marking, low expense, and objectivity (Weir, 1990, 1993). Individuals get one point for each correct answer and zero for each incorrect answer. All test items are checked automatically by the computer against a pre-specified answer key. The reading tasks are designed to elicit responses that reflect an understanding of regular day-to-day and work-related written English, and the sections become gradually harder as the test progresses (Wu & Stone, 2015). The multiple-choice items in all sections of the reading test, apart from section three, have four distracters for one key. Section three has five distracters for one key. The first section, Reading Correspondence, is based on social or work-related email communication and entails an understanding of the underlying “sociolinguistic and pragmatic” features of written communication along with vocabulary, sentence structure, and grammar. Analyzing graphical information (presented in an email) is the focus of the second section, Reading to Apply a Diagram. The third section, Reading for Information, presents factual information for interpretation. Finally, in the form of an “editorial genre”, the fourth section, Reading for Viewpoints, requires test takers to understand critical and abstract ideas (Wu & Stone, 2015). In addition, to ensure item quality, some unscored items are included in the reading test, which is a standard procedure for tests of this nature. These can occur in any of the four sections of the reading test and are indistinguishable from the other items (“CELPIP-General Test format and scoring”, n.d.).
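The scoring rule described above (one point per correct answer, zero otherwise, with unscored items left out of the total) can be illustrated with a minimal Python sketch. The item identifiers, the answer key, and the function name `score_reading` are hypothetical and chosen only for illustration; the sketch does not represent Paragon's actual scoring software.

```python
from typing import Dict, Set


def score_reading(
    responses: Dict[str, str],
    answer_key: Dict[str, str],
    unscored: Set[str],
) -> int:
    """Return the raw score: one point per correct scored item, zero otherwise."""
    raw_score = 0
    for item_id, key in answer_key.items():
        if item_id in unscored:
            # Unscored (pilot) items are presented to the test taker
            # but never contribute to the raw score.
            continue
        if responses.get(item_id) == key:
            raw_score += 1
    return raw_score


# Hypothetical data: four items, of which R4 is an unscored pilot item.
answer_key = {"R1": "B", "R2": "D", "R3": "A", "R4": "C"}
unscored = {"R4"}
responses = {"R1": "B", "R2": "A", "R3": "A", "R4": "C"}

print(score_reading(responses, answer_key, unscored))  # prints 2
```

In this example the test taker answers R1 and R3 correctly, misses R2, and also answers R4 correctly, but R4 does not count because it is flagged as unscored.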

Validity and Reliability

Validity and reliability are the two most critical traits of language assessment, especially for large-scale tests that involve important consequences for a wide range of people (Bachman & Palmer, 1996). Validity is established through “a long-term process of accumulating, evaluating, interpreting, conveying, and refining evidence from multiple sources about diverse aspects of the uses of an assessment to fulfill particular purposes to its guiding construct/(s)” (Cumming, 2013, p. 1), and reliability is “considered to be a function of the consistency of scores from one set of tests and test tasks to another” (Bachman & Palmer, 1996, p. 19). Research by Paragon Testing Enterprises provides substantial evidence of the reliability and validity of the CELPIP-G Test (Chen, 2014; Wu, 2011; Wu et al., 2012). Focusing on aspects such as measurement error, gender bias, convergent and criterion validity, construct validity, and dimensionality, these studies concluded that, despite some irregularities, the CELPIP-G Test meets the standards of the industry and is analogous to other leading proficiency tests. Other studies (Wu & Stone, 2015; Wu, Stone, & Lui, 2015), on the other hand, raised questions about some aspects of validity and reliability. Wu et al. (2012) examined the suitability and effect of categorizing test takers into the proficiency levels that determine their eligibility for immigration. The levels are established by three cut scores, or thresholds, that classify test takers into four proficiency levels: no proficiency, basic proficiency, moderate proficiency, and high proficiency. The study found the reading section to be less consistent and less accurate at the high proficiency level and suggested that the developers add items to the reading section at the third cut point to distinguish between high-proficiency and moderate-proficiency test takers. The revised scoring system of the CELPIP-G accounted for this problem. A study by Wu and Stone (2015), which focused on the reading test-taking strategies of individuals, also provides evidence for validity. They found that test takers gave priority to comprehension skills in analyzing tests rather than to “test-wiseness”, a factor that would undermine construct validity.
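To make the classification scheme discussed by Wu et al. (2012) concrete, the following Python sketch shows how three cut scores partition a score range into the four proficiency levels. The cut-score values (10, 20, 30) are invented for illustration, and the convention that a score equal to a cut score falls into the higher level is an assumption; neither is taken from the CELPIP-G documentation.

```python
from bisect import bisect_right
from typing import Sequence

LEVELS = [
    "no proficiency",
    "basic proficiency",
    "moderate proficiency",
    "high proficiency",
]


def classify(score: float, cut_scores: Sequence[float]) -> str:
    """Map a score to one of four levels using three ascending cut scores."""
    if len(cut_scores) != len(LEVELS) - 1:
        raise ValueError("exactly three cut scores are required")
    if list(cut_scores) != sorted(cut_scores):
        raise ValueError("cut scores must be in ascending order")
    # bisect_right counts how many cut scores are <= score, so a score
    # equal to a cut score is placed in the higher level (an assumption).
    return LEVELS[bisect_right(cut_scores, score)]


# Hypothetical cut scores, not actual CELPIP-G thresholds.
cuts = (10, 20, 30)
for s in (9, 10, 29, 30):
    print(s, classify(s, cuts))
```

A test taker scoring 29 and one scoring 30 land on opposite sides of the third cut point, which is why measurement precision near that threshold matters for the consistency and accuracy of classification.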

As presented on its website, Paragon Testing Enterprises asserts that its mission is “to develop and produce innovative, valid, fair, accurate, and high quality assessments through collaboration with the unit’s members and with its many stakeholders” (“About research at Paragon”, n.d.). Moreover, it “is dedicated to sharing the research on its English language testing program with users of its tests” (“About research at Paragon”, n.d.). Accordingly, it provides information about the items, users and test takers, sample tests, and procedures, and it outlines appropriate uses and warns against misuses (“Paragon Testing Enterprise”, n.d.).

Limitations

An important feature of language tests mentioned by Bachman and Palmer (1996) is test fairness. “Fairness implies that every test taker has the opportunity to prepare for the test and is informed about the general nature and content of the test, as appropriate to the purpose of the test” (Code of Fair Testing Practices in Education, 2004). The first limitation is that the information provided about the Canadian variety of English is limited; test takers are not given a clear description of the “Canadian variety” included in the test, which brings construct validity into question. The official CELPIP test website states, “Canadian English is the English variety spoken in Canada. It contains elements of British English and American English in its vocabulary, as well as many distinctive Canadianisms … both British and American English spellings are accepted on the CELPIP Test” (“What is Canadian English?”, n.d.).

The link provided by Paragon Testing Enterprises to a Wikipedia source is mostly theoretical in nature, and although it gives some examples of Canadian English norms, it is difficult for test takers to relate the information to test preparation. The available sample test is also not enough to give a definite insight into the nature of the Canadian variety of English. It is therefore unclear what the test is assessing: general reading comprehension skills, familiarity with Canadian English, or both.

The second drawback of the test is its use of multiple-choice items as the only form of assessment in the reading section, which raises issues of content validity. Although multiple-choice items have the advantage of convenience and high reliability, answering them is “an unrealistic task, as in real life one is rarely presented with several alternatives from which to make a choice to signal understanding” (Weir, 1990, p. 44). In many cases the format might lead to guessing, or test takers can be trained to choose the correct answer. It also tends to place more emphasis on a test taker’s familiarity with the linguistic system (Shin, 2012). It is, therefore, advisable to “use more than one test method for testing any ability” (Alderson, Clapham, & Wall, 1995, p. 45) because “by using a variety of different task types, the test is far more likely to provide a balanced assessment” (Buck, 2001, p. 153). Given that the purpose of the CELPIP-G Test is to assess general English functional competency, items “which appear integrative, authentic, communicative, and pragmatic from real world language use situations” (Shin, 2012, p. 239) could be included.

Finally, an important issue that emerges from the discussion is the washback effect of the test. As the CELPIP-G score is often used in high-stakes situations, such as immigration, it exerts considerable influence on how test takers prepare for and take the test, the feedback they receive, and the decisions made on the basis of the test (Bachman & Palmer, 1996). So far, one study (Wu et al., 2012) has outlined unintended consequences in terms of classification consistency and accuracy, but other areas of washback have not been investigated. One potential direct effect of the test, however, is the test taker’s investment of time and money in buying materials or enrolling in programs to get a clear idea of the “Canadianisms” the CELPIP-General aims to represent.

Conclusion

To conclude, despite the doubts expressed regarding general construct specification and item choice in the reading section, the CELPIP-G Test plays an important role in Canada in assessing English language skills for permanent resident and immigration purposes. In comparison to IELTS, the alternative test accepted by CIC, the CELPIP-G can be considered more feasible because it is cheaper. The computer-based delivery makes it less time-consuming, and the results are available sooner. International students and non-resident workers living in Canada who intend to become permanent residents can take the CELPIP-G Test to provide proof of English language ability. However, issues related to washback effects and fairness need to be revisited to account for test validity and reliability as the use of the CELPIP-G Test expands in Canada.

References

About research at Paragon. (n.d.). Paragon Testing Enterprises. Retrieved from https://www.paragontesting.ca/research/

Alderson, J. C., Clapham, C., & Wall, D. (1995). Language test construction and evaluation. Cambridge: Cambridge University Press.

Bachman, L. F., & Palmer, A. S. (1996). Language testing in practice. Oxford: Oxford University Press.

Buck, G. (2001). Assessing listening. Cambridge, UK: Cambridge University Press.

CELPIP-G Brochure. (n.d.). Paragon Testing Enterprises. Retrieved from https://www.celpiptest.ca/wp-content/uploads/2015/09/CELPIP-G-Brochure-WEB.pdf

CELPIP-General Test format and scoring. (n.d.). Paragon Testing Enterprises. Retrieved from https://www.celpiptest.ca/about-celpip-g/test-format-and-scoring/

CELPIP General. (n.d.). Paragon Testing Enterprises. Retrieved from https://www.paragontesting.ca/english-language-tests/celpip-test/

Citizenship and Immigration Canada. (n.d.). Retrieved from http://www.cic.gc.ca/english/informationapplications/guides/CIT0002ETOC.asp#CIT0002E4

Code of fair testing practices in education. (2004). Washington, DC: Joint Committee on Testing Practices, American Psychological Association. Retrieved from http://www.apa.org/science/programs/testing//fair-code.aspx.

Cumming, A. (2013). Validation of language assessments. In C. Chapelle (Ed.), The encyclopedia of applied linguistics. Malden, MA: Wiley-Blackwell. DOI: 10.1002/9781405198431.wbeal1242

McKenzie, T. (2014, April 15). Recent changes to the CELPIP test. Retrieved from https://www.immigrationnation.ca/blog.aspx?entry=1892

Paragon Testing Enterprise. (n.d.). Retrieved from https://www.celpiptest.ca/about-celpip-g/

Shin, D. (2012). Language assessment for immigration and citizenship. In G. Fulcher & F. Davidson (Eds.), The Routledge handbook of language testing (pp. 237-248). New York, USA: Routledge.

Weir, C. J. (1990). Communicative language testing. New York: Prentice Hall.

Weir, C. J. (1993). Understanding and developing language tests. New York: Prentice Hall.

What is Canadian English? (n.d.). Paragon Testing Enterprises. Retrieved from https://www.celpiptest.ca/faq/

Wu, A. D. (2011). Report on the evaluation of the CELPIP-G Test scores. Paragon Testing Enterprises. Retrieved from https://www.paragontesting.ca/wp-content/uploads/2012/06/Report-on-Evaluation-of-CELPIP-G-Test-Scores.pdf

Wu, A. D., & Stone, J. E. (2015). Validation through understanding test-taking strategies: An illustration with the CELPIP-General reading pilot test using structural equation modeling. Journal of Psychoeducational Assessment. Advance online publication. DOI: 10.1177/0734282915608575

Wu, A. D., Stone, J. E., & Lui, Y. (2015). Developing a validity argument through abductive reasoning with an empirical demonstration of the latent class analysis. International Journal of Testing. Advance online publication. DOI: 10.1080/15305058.2015.1057826

Wu, A. D., Wehrung, D., & Zumbo, B. D. (2012). The validation of the CELPIP-G Test for Canadian immigrants: Classification consistency and accuracy. Paper presented at the 2012 Annual Meeting of the American Educational Research Association, Vancouver, Canada. Retrieved from https://www.paragontesting.ca/wp-content/uploads/2012/06/The-Validation-of-the-CELPIP-G-Test-for-Canadian-Immigration.pdf

Author Bio

Shayla Ahmad holds an M.A. in Applied Linguistics. She teaches EAP at George Brown College and English Communication Skills at Humber College. Her professional interests include assessment and second language acquisition. shayla.ahmad@hotmail.com
